Introduction

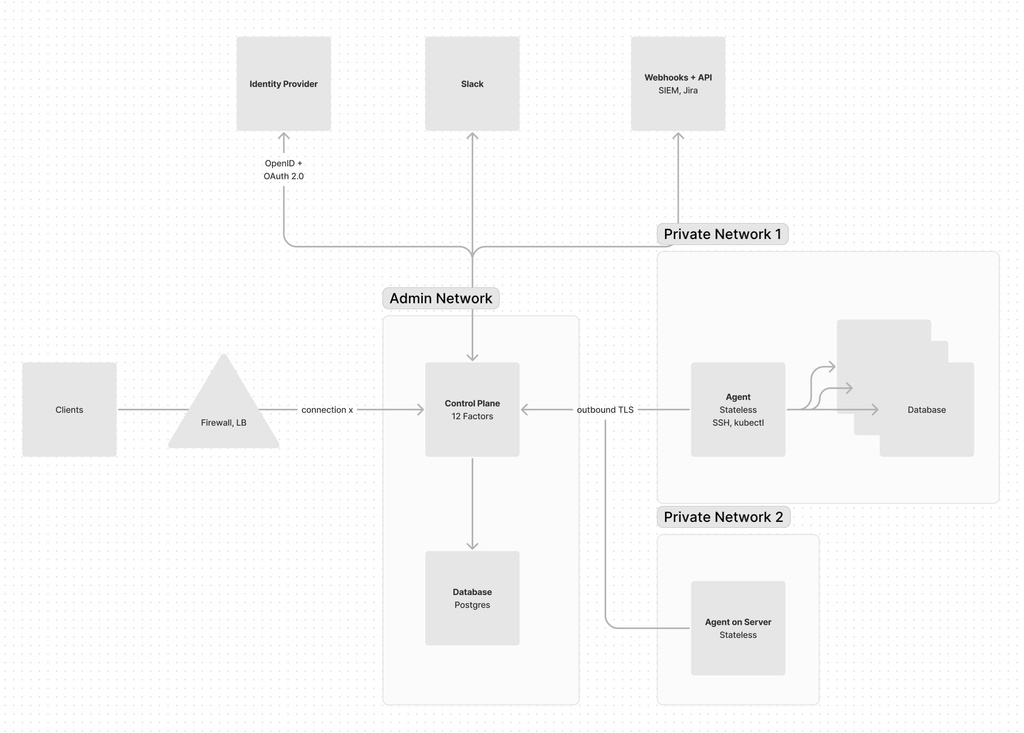

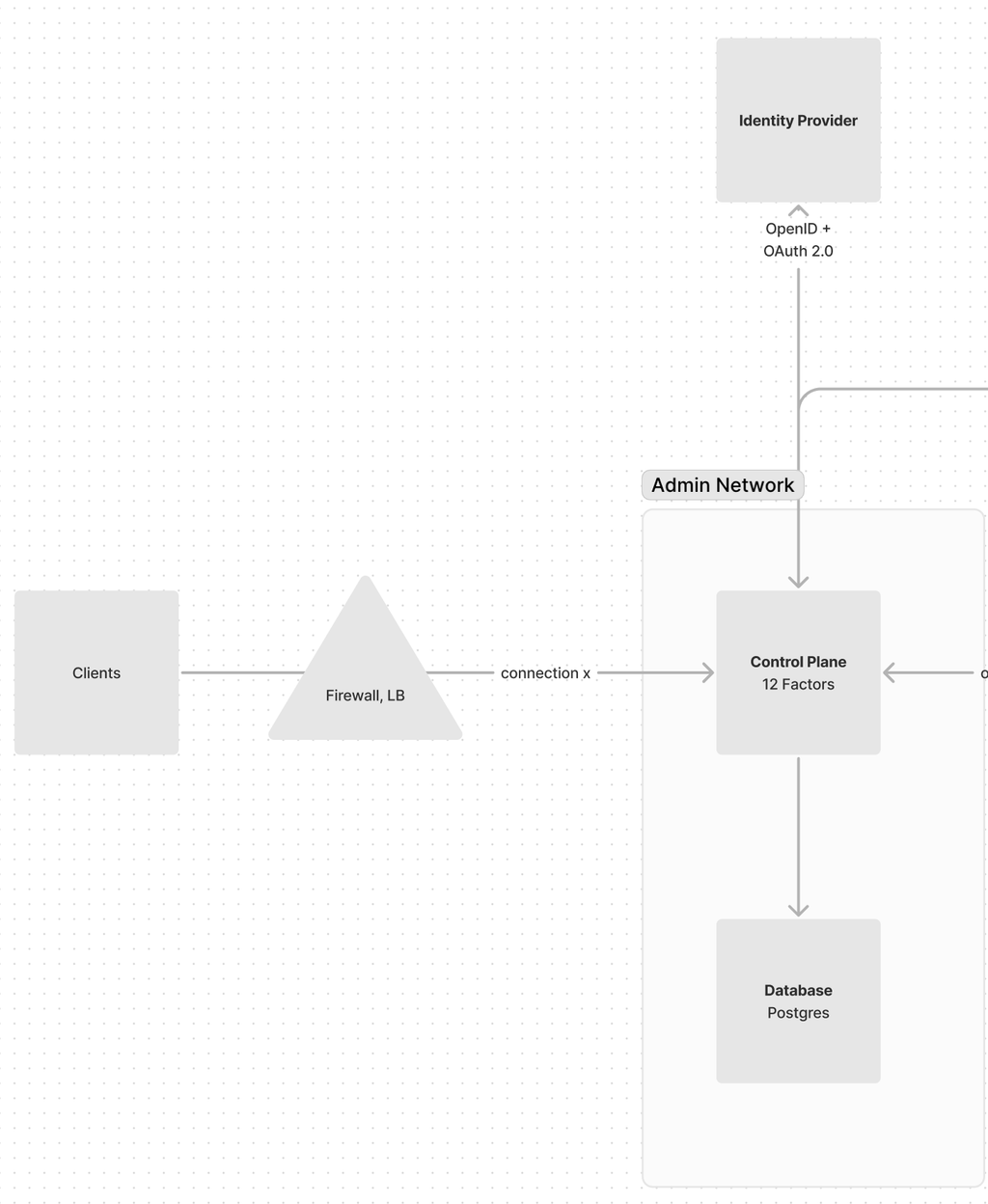

Hoop consists of three main components:

- Gateway

The gateway exposes an API and uses the gRPC protocol to let clients connect to it. It is the central component responsible for configuring, storing, and routing packets between clients.

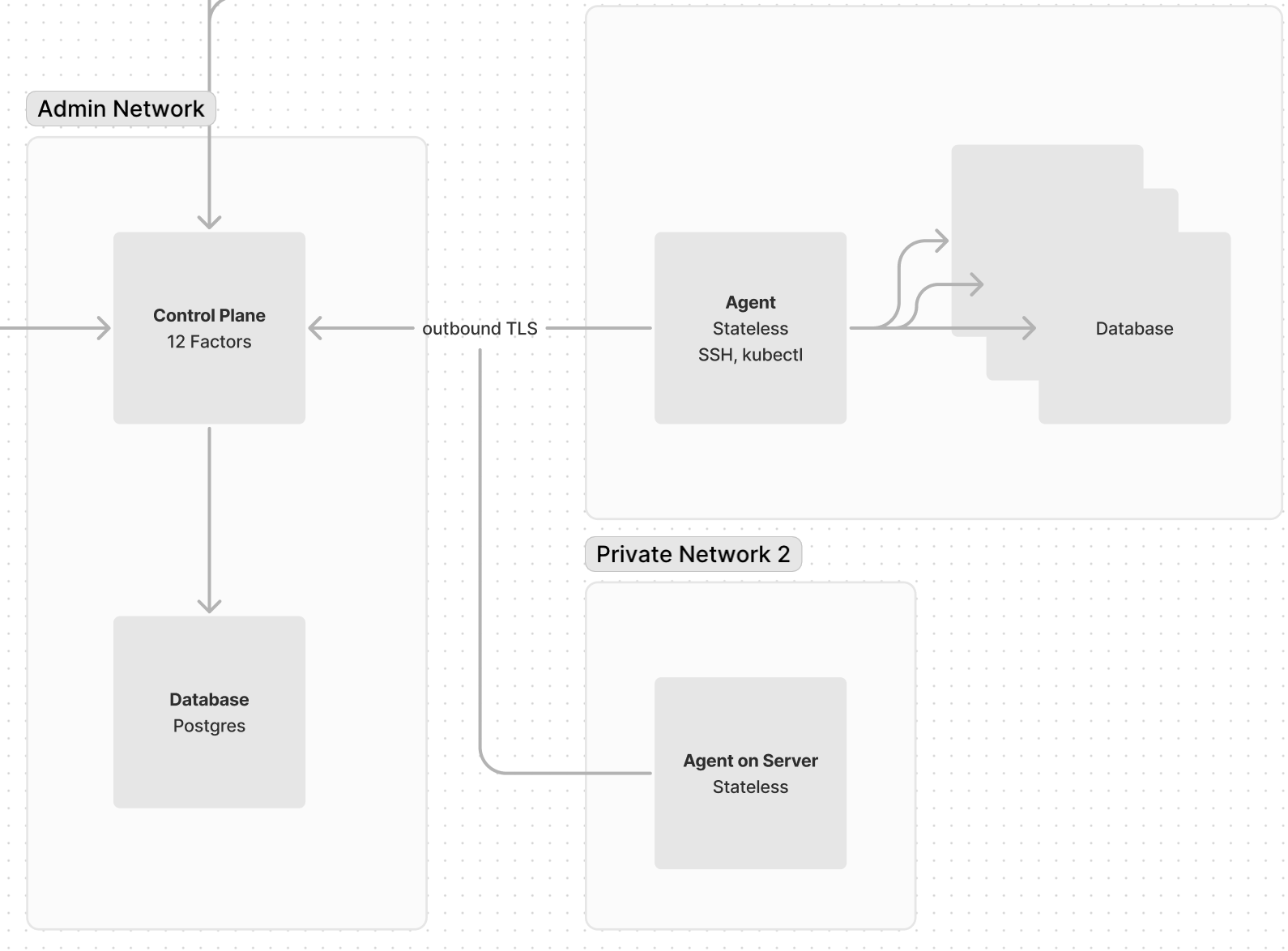

- Agents

The agent is responsible for connecting your private services. It acts as a client, connecting with the gateway and securely exchanging packets via gRPC.

See Setup.

- Clients

Users interact with resources called connections through clients. The main clients include the command line and the webapp.

See Connect & Manage.

Gateway

To start the gateway, use the command `hoop start gateway`. Below is a list of the configurations:

| ENVIRONMENT | REQUIRED | DESCRIPTION | DEFAULT VALUE |
|---|---|---|---|
| POSTGRES_DB_URI | yes | The Postgres connection string used to connect to the database. | |
| API_URL | yes | API URL address (identity provider) | |
| IDP_URI | yes | OIDC & Oauth2 configuration in URI format: `<scheme>://<client-id>:<client-secret>@<issuer-host>?<options>=` | |
| IDP_AUDIENCE | no | Identity Provider Audience (Oauth2) | |
| IDP_ISSUER (DEPRECATED) | no | OIDC Issuer URL. Adding a query string containing `_userinfo=1` forces the gateway to validate the access token using this endpoint. | |
| IDP_CLIENT_ID (DEPRECATED) | no | Oauth2 client id | |
| IDP_CLIENT_SECRET (DEPRECATED) | no | Oauth2 client secret | |
| IDP_CUSTOM_SCOPES (DEPRECATED) | no | Oauth2 additional scopes | |
| GRPC_URL | no | The gRPC URL to advertise to clients. | {API_URL}:8443 |
| STATIC_UI_PATH | no | The path where the UI assets reside | /app/ui/public |
| PLUGIN_AUDIT_PATH | no | The path where the temporary sessions are stored | /opt/hoop/sessions |
| PLUGIN_INDEX_PATH | no | The path where the temporary indexes are stored | /opt/hoop/indexes |
| GIN_MODE | no | Turn on (debug) logging of routes | release |
| LOG_ENCODING | no | The encoding of output logs (console, json) | |
| LOG_LEVEL | no | The verbosity of logs (debug,info,warn,error) | info |
| LOG_GRPC | no | "1" enables logging gRPC protocol | |
| ORG_MULTI_TENANT | no | Enable organization multi-tenancy | |
| ASK_AI_CREDENTIALS | no | The ChatGPT credentials in URL format: `<scheme>://_:<apikey>@<api-host>` | |
| GOOGLE_APPLICATION_CREDENTIALS_JSON | no | GCP DLP credentials | |
| WEBHOOK_APPKEY | no | The application key to send messages to the webhook provider. | |
| ADMIN_USERNAME | no | Changes the name of the group to act as admin | admin |
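Before launching the gateway, it can help to check that the required variables are present. The following is a minimal sketch, not part of Hoop itself: the helper name `check_gateway_env` is hypothetical, the sample `API_URL` value is a placeholder, and the required names and default values are copied from the table above.

```python
# Required variables and documented defaults, taken from the configuration table.
REQUIRED = ("POSTGRES_DB_URI", "API_URL", "IDP_URI")
DEFAULTS = {
    "STATIC_UI_PATH": "/app/ui/public",
    "PLUGIN_AUDIT_PATH": "/opt/hoop/sessions",
    "PLUGIN_INDEX_PATH": "/opt/hoop/indexes",
    "GIN_MODE": "release",
    "LOG_LEVEL": "info",
    "ADMIN_USERNAME": "admin",
}


def check_gateway_env(env):
    """Return (missing required names, effective values for defaulted options).

    Hypothetical helper: `env` is any mapping of variable name to value,
    for example os.environ.
    """
    missing = [name for name in REQUIRED if not env.get(name)]
    effective = {name: env.get(name) or default for name, default in DEFAULTS.items()}
    return missing, effective


# Example with a placeholder environment that forgets IDP_URI.
sample_env = {
    "POSTGRES_DB_URI": "postgres://hoopuser:<passwd>@<db-host>:5432/hoopdb",
    "API_URL": "https://hoop.example.com",  # placeholder value
    "LOG_LEVEL": "debug",
}
missing, effective = check_gateway_env(sample_env)
print("missing:", missing)
print("log level:", effective["LOG_LEVEL"])
```

Passing `os.environ` instead of `sample_env` would run the same check against the real process environment.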

The `IDP_URI` is a URI format that enables the configuration of the identity provider:

```shell
<scheme>://<client-id>:<client-secret>@<issuer-host>?<options>=
```

| CONFIG | REQUIRED | DESCRIPTION |
|---|---|---|
| `<scheme>` | yes | The protocol of the OIDC issuer URL: http or https |
| `<client-id>` | yes | |
| `<client-secret>` | yes | |
| `<issuer-host>` | yes | The host path part of the OIDC Issuer URL. |
| `scopes` | no | Additional Oauth2 scopes to append to the request. Default values are openid, profile and email. |
| `groupsclaim` | no | The name of the claim to consider as configuration to propagate groups. Defaults to `https://app.hoop.dev/groups` |
| `_userinfo` | no | When this option is set to 1, it forces authentication using the userinfo endpoint. |

Example Configuration

```shell
IDP_URI=https://ahph:caiJoah@auth.hoop.dev/?scopes=groups,phone&groupsclaim=groups
```
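To see how the parts of an `IDP_URI` map onto the options described above, the URI can be taken apart with Python's standard `urllib.parse`. This is an illustrative sketch only; it is not the gateway's own parser, and the function name `parse_idp_uri` and the shape of the returned dictionary are assumptions made for the example.

```python
from urllib.parse import parse_qs, unquote, urlsplit

# Defaults documented in the options table above.
DEFAULT_SCOPES = ["openid", "profile", "email"]
DEFAULT_GROUPS_CLAIM = "https://app.hoop.dev/groups"


def parse_idp_uri(uri):
    """Split an IDP_URI into its documented parts (hypothetical helper)."""
    parts = urlsplit(uri)
    # Keep only the first value of each query option.
    options = {key: values[0] for key, values in parse_qs(parts.query).items()}
    # Everything after the credentials in the authority is the issuer host.
    host = parts.netloc.rpartition("@")[2]
    extra_scopes = [s for s in options.get("scopes", "").split(",") if s]
    return {
        "issuer": f"{parts.scheme}://{host}{parts.path}",
        "client_id": unquote(parts.username or ""),
        "client_secret": unquote(parts.password or ""),
        "scopes": DEFAULT_SCOPES + extra_scopes,
        "groups_claim": options.get("groupsclaim", DEFAULT_GROUPS_CLAIM),
        "userinfo": options.get("_userinfo") == "1",
    }


config = parse_idp_uri(
    "https://ahph:caiJoah@auth.hoop.dev/?scopes=groups,phone&groupsclaim=groups"
)
print(config["issuer"], config["scopes"], config["groups_claim"])
```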

In the SSO section, there are instructions on how to configure an application on your identity provider.

Storage

Hoop uses Postgres as the backend storage for all data in the system. The user that connects to the database must be a superuser or have the `CREATEROLE` permission. The commands below create the database and default user required when starting the gateway.

```sql
CREATE DATABASE hoopdb;
CREATE USER hoopuser WITH ENCRYPTED PASSWORD 'my-secure-password' CREATEROLE;
-- switch to the created database
\c hoopdb
GRANT ALL PRIVILEGES ON DATABASE hoopdb TO hoopuser;
GRANT ALL PRIVILEGES ON SCHEMA public TO hoopuser;
GRANT ALL PRIVILEGES ON ALL TABLES IN SCHEMA public TO hoopuser;
```

If the password contains special characters, make sure to URL-encode it when setting the connection string.

Now, use these values to assemble the configuration for POSTGRES_DB_URI.

POSTGRES_DB_URI=postgres://hoopuser:<passwd>@<db-host>:5432/hoopdb
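As a sketch of the URL-encoding step, the connection string can be assembled with `urllib.parse.quote` so that characters such as `@`, `:` and `/` in the password do not break the URI. The function name `postgres_db_uri`, the host `db.internal` and the sample password are placeholders chosen for this example.

```python
from urllib.parse import quote


def postgres_db_uri(user, password, host, database, port=5432):
    """Build a POSTGRES_DB_URI value with percent-encoded credentials."""
    # safe="" makes quote() encode every reserved character, including "/".
    return (
        f"postgres://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{database}"
    )


# Placeholder host and password, for illustration only.
print(postgres_db_uri("hoopuser", "p@ss:word/1", "db.internal", "hoopdb"))
# prints postgres://hoopuser:p%40ss%3Aword%2F1@db.internal:5432/hoopdb
```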

Database User Permissions

When the gateway starts, it automatically migrates tables, views, and functions. The main user must have the `CREATEROLE` permission and full privileges for the database and its default schema: `public`.

Session Blobs

The paths configured by the environment variables `PLUGIN_AUDIT_PATH` and `PLUGIN_INDEX_PATH` contain audit session data that is in transit. Blobs stored on the filesystem persist there until the session is flushed to the underlying system.

We strongly recommend mounting a persistent volume at those paths.

Data Masking (Data Loss Prevention)

Google Data Loss Prevention redacts the content of connections on the fly. To use it, you need a service account with the role `roles/dlp.user`. Use the configuration `GOOGLE_APPLICATION_CREDENTIALS_JSON` to set the service account.

```shell
export GOOGLE_APPLICATION_CREDENTIALS_JSON='{"type":"service_account",...}'
```
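Service account keys are usually downloaded as multi-line JSON files, so it can be convenient to collapse one into a single line before exporting it. The sketch below is an assumption about a reasonable workflow, not a Hoop tool: `compact_service_account` is a hypothetical helper and the sample key contains placeholder fields only.

```python
import json


def compact_service_account(raw):
    """Validate a service account key and return it as single-line JSON."""
    data = json.loads(raw)
    if data.get("type") != "service_account":
        raise ValueError("expected a key with type=service_account")
    # Compact separators remove the whitespace json.dumps adds by default.
    return json.dumps(data, separators=(",", ":"))


# Placeholder key content; a real key file has more fields.
sample_key = """
{
  "type": "service_account",
  "project_id": "my-project"
}
"""
print(compact_service_account(sample_key))
```

The printed line is what would be assigned to `GOOGLE_APPLICATION_CREDENTIALS_JSON`.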

HTTP Server (:8009)

The gateway exposes a RESTful HTTP API for clients to consume. By default, it binds to port 8009. Additionally, it provides the webapp application as a single page application on the same port, but under a different path.

- `{API_URL}/api/*`: REST HTTP API routes

- `{API_URL}/*`: Webapp routes

gRPC Server (:8010)

The gRPC gateway provides a bidirectional connection between clients, allowing for the implementation of complex exchanges using multiple protocols. By default, it binds to port 8010.

Runtime Images

To start a gateway instance, we recommend using the `hoophq/hoop` image. It contains all the necessary dependencies and runs alongside them.

Scalability

1. Capacity of a Single Instance

The load on the system grows more slowly than linearly with the volume of data processed and the number of connected users, and a single instance can handle thousands of simultaneous connections. This characteristic is central to the system's scalability strategy.

2. Vertical Scaling: The Preferred Option

Given the high capacity of a single instance, vertical scaling emerges as the preferred approach for several reasons:

- Ease of Operation: Operating a system with fewer, more powerful instances is generally simpler than managing a large number of smaller instances, even when using orchestration systems like Kubernetes.

- Cloud-Native Deployment Benefits: Hoop is cloud-native, meaning it can quickly recover from issues. For example, in a Kubernetes environment, if a container instance faces problems, it will be restarted in seconds. The primary impact on users would be a temporary disconnection, which would occur in any system facing similar issues.

- High Capacity Utilization: Because a single instance can handle a large number of connections, vertical scaling allows us to fully utilize the capacity of each instance.

3. Horizontal Scaling: Challenges and Limitations

Horizontal scaling, while a common strategy, presents specific challenges in Hoop’s context:

- Limitations with gRPC Connections: A significant portion of our load is managed within live gRPC connections, which are not well-suited for proxy load balancing. This limitation reduces the effectiveness of horizontal scaling.

- Uneven Load Distribution: Consider a scenario where 10 users are connected, but 2 of them exhibit extreme usage patterns and end up on the same server instance, while the remaining 8 are distributed to another instance. The instance with the 2 high-usage connections will face a disproportionately higher load.
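The uneven distribution scenario above can be made concrete with a few lines of Python. This is a toy model under stated assumptions: load is expressed in arbitrary units, the two heavy users are assumed to generate 50 units each and the other eight 1 unit each, and the instance names `a` and `b` are made up.

```python
def load_per_instance(assignment, usage):
    """Sum the usage of every user on each instance."""
    totals = {}
    for user, instance in assignment.items():
        totals[instance] = totals.get(instance, 0) + usage[user]
    return totals


# Ten users: the first two are heavy, the rest are light (assumed numbers).
usage = {f"user{i}": (50 if i < 2 else 1) for i in range(10)}
# Both heavy users land on instance "a"; the eight light users on "b".
assignment = {f"user{i}": ("a" if i < 2 else "b") for i in range(10)}

print(load_per_instance(assignment, usage))
# prints {'a': 100, 'b': 8}
```

Although instance `b` holds four times as many connections, instance `a` carries almost all of the load, which is why counting connections alone does not balance work across instances.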

4. Impact of Long-Running Connections

The nature of user interaction further complicates the distribution of load in horizontal scaling:

- Long-Session Concentration: Users often maintain connections for extended periods. For instance, if 10 users connect, with 3 of them leaving their sessions open for several days, while others disconnect after a short duration, the longer sessions can concentrate on fewer servers based on round-robin distribution. This concentration can lead to uneven load distribution and potential strain on specific servers.
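A small sketch of the long-session effect, assuming two servers and strict round-robin placement in connection order. Which users keep their sessions open is an assumption picked for the example (the first, third and fifth to connect).

```python
from collections import Counter


def round_robin(n_connections, n_servers):
    """Place connection i on server i modulo n_servers."""
    return [i % n_servers for i in range(n_connections)]


def remaining_sessions(placement, long_lived, n_servers):
    """Count per server the sessions still open after short ones disconnect."""
    counts = Counter(placement[i] for i in long_lived)
    return [counts.get(server, 0) for server in range(n_servers)]


placement = round_robin(10, 2)
# Assumed: connections 0, 2 and 4 stay open for days, the rest disconnect.
print(remaining_sessions(placement, {0, 2, 4}, 2))
# prints [3, 0]
```

Round-robin spread the ten initial connections evenly, yet once the short sessions end, all three long-lived sessions sit on the same server.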

Summary

In conclusion, while horizontal scaling is a common approach, our system's characteristics and user interaction patterns make vertical scaling a more effective and manageable option. This strategy leverages the high capacity of individual instances and aligns well with the cloud-native deployment advantages, ensuring rapid recovery and minimal user impact in the event of issues.

Agent

| ENVIRONMENT | REQUIRED | DEFAULT VALUE | DESCRIPTION |
|---|---|---|---|
| HOOP_KEY | yes | | The DSN key secret used to connect to the gateway. |
| LOG_ENCODING | no | "json" | The log encoding to output logs: json,console |
| LOG_LEVEL | no | "INFO" | The level of logs: DEBUG,INFO,WARN,ERROR |
| LOG_GRPC | no | 0 | Enables logging gRPC: 0,1,2 |

TLS Connection

The agent is required to connect via TLS. If the gateway is running with an insecure (non-TLS) configuration, the agent will not be able to connect via gRPC because of this strict requirement.

```shell
$ hoop start agent
{... grpc_server=use.hoop.dev:8443, tls=true, strict-tls=true ..."}
```

Debugging

To start the agent in debug mode, either set the option `--debug` or set the environment variable `LOG_LEVEL=DEBUG`. For debugging gRPC connection traffic logs, use the option `--debug-grpc` or set the environment variable `LOG_GRPC=1`.

```shell
$ hoop start agent --debug --debug-grpc
```

Scalability

The Agent shares the characteristics and scaling strategies discussed in the Gateway section. Here is a brief overview of how they apply to the Agent:

- High Capacity of a Single Instance: Like the Gateway, a single instance of the Agent component can manage thousands of connections. This high capacity plays a crucial role in determining the most effective scaling strategy.

- Vertical Scaling as the Preferred Approach: Reflecting the main system's strategy, vertical scaling is also preferred for the Agent component. This approach is favored due to:

  - Simplified operational management.

  - Efficient utilization of the high capacity of a single instance.

  - Advantages offered by cloud-native deployment, such as rapid recovery from issues.

- Challenges in Horizontal Scaling: The Agent component encounters similar challenges in horizontal scaling as the main system. These include:

  - Limited effectiveness due to the nature of live gRPC connections.

  - Potential for uneven load distribution among instances, especially in scenarios with varying usage patterns among users.

- Considerations for Long-Running Connections: The Agent component also needs to account for the impact of long-running connections on load distribution, mirroring the considerations outlined earlier.

Summary

The Agent component's scaling and capacity characteristics align closely with those of the main system, emphasizing the preference for vertical scaling due to operational simplicity, effective utilization of capacity, and the benefits of cloud-native deployment. The challenges and considerations in horizontal scaling, particularly in relation to live gRPC connections and the impact of long-running sessions, further reinforce this strategy.